Computer Vision and Rubik's Cube

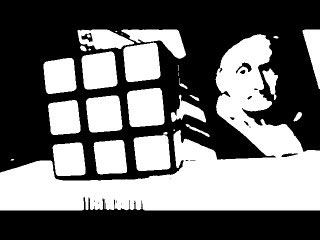

The idea is to hold a face of a Rubik's cube in front of a webcam. The computer vision algorithm detects the position of the face within the webcam picture and identifies the facelet stickers together with their colors. The program then solves the cube with the two-phase algorithm.

This recognition task is so simple for the human eye that you usually do not waste a single thought on it, but implementing it as a computer algorithm takes some effort to get a reasonable result. I use the OpenCV framework and Python in my project and got good results on a PC with a USB webcam and also with the Raspberry Pi and its non-USB camera module.

You can download the project here:

https://github.com/hkociemba/RubiksCube-TwophaseSolver

And here is an example of the solver in action, and here another one.

To detect the facelets of a cube face I gave Canny edge detection a try, but the results were not satisfactory, so I tried another approach. The characteristic of a cube facelet is that it is a quadrangle with a more or less constant color.


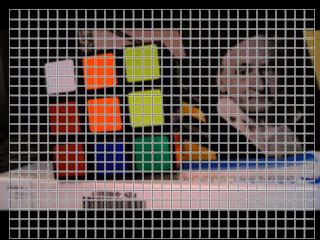

To detect such regions we superimpose a grid on the picture and compute for each grid square the standard deviation of the hue values of its pixels. Regions of interest are those grid squares where the standard deviation of the h values in the HSV color space is sufficiently small (this indicates colored facelets) - or where the s value is low and the v value is high (this indicates white facelets).
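A minimal sketch of this grid scan, assuming the frame has already been converted to HSV in OpenCV layout (h in 0..179, s and v in 0..255, as produced by `cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)`). The function name, the grid size and all thresholds are illustrative assumptions, not the values used in the project. The plain standard deviation also ignores that hue wraps around at red.

```python
import numpy as np

def find_interesting_squares(hsv, cell=16, sigma_max=5.0, sat_max=60, val_min=150):
    """Scan a grid over an HSV image and collect facelet candidates.

    hsv: array of shape (height, width, 3) holding h, s, v per pixel.
    Returns two lists of (x, y) top-left corners: colored and white squares.
    All threshold defaults are assumptions chosen for illustration.
    """
    height, width = hsv.shape[:2]
    colored, white = [], []
    for y in range(0, height - cell + 1, cell):
        for x in range(0, width - cell + 1, cell):
            block = hsv[y:y + cell, x:x + cell].astype(np.float64)
            h, s, v = block[:, :, 0], block[:, :, 1], block[:, :, 2]
            # Nearly constant hue: candidate for a colored facelet.
            if h.std() < sigma_max:
                colored.append((x, y))
            # Low saturation and high brightness: candidate for a white facelet.
            if s.mean() < sat_max and v.mean() > val_min:
                white.append((x, y))
    return colored, white
```

The two tests are kept independent so that, as in the text, white and colored candidates are collected separately.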

Each of the "interesting" grid squares (we do this separately for white and color) is then extended to a 3x3 square with the "interesting" square in the center. For the 3x3 square we then create a binary mask in which we select (filter) all pixels with properties similar to those of the center square.

All these 3x3 masks are ORed together and in this way we get a white_filter mask and a color_filter mask for the complete grid. Essentially we then search for square-shaped objects in these two masks.
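The mask construction for the colored facelets could be sketched as follows. It assumes that "similar properties" means a hue within a fixed tolerance of the center square's mean hue. The function name `build_color_mask`, the parameter `hue_tol` and the grid size are hypothetical and not taken from the project.

```python
import numpy as np

def build_color_mask(hsv, squares, cell=16, hue_tol=10):
    """OR together one 3x3 neighborhood mask per interesting grid square.

    hsv: array of shape (height, width, 3) holding h, s, v per pixel.
    squares: list of (x, y) top-left corners of interesting grid squares.
    """
    height, width = hsv.shape[:2]
    hue = hsv[:, :, 0].astype(np.int16)
    mask = np.zeros((height, width), dtype=bool)
    for x, y in squares:
        center_hue = hue[y:y + cell, x:x + cell].mean()
        # 3x3 block of grid squares around the center, clipped to the frame.
        y0, y1 = max(y - cell, 0), min(y + 2 * cell, height)
        x0, x1 = max(x - cell, 0), min(x + 2 * cell, width)
        # Select the pixels in the block whose hue is close to the center hue.
        similar = np.abs(hue[y0:y1, x0:x1] - center_hue) <= hue_tol
        # OR the local mask into the mask for the complete grid.
        mask[y0:y1, x0:x1] |= similar
    return mask
```

A white mask could be built the same way by comparing s and v instead of the hue.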

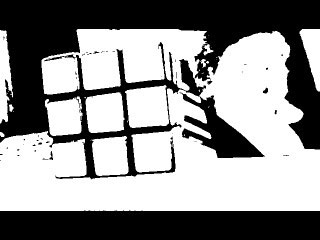

It is possible to get better results if we AND each of these masks with the "black_filter mask" to mask out all dark pixels because these are never part of a cube facelet (so black stickers are not supported).
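As a sketch, and assuming the black filter is a simple threshold on the v channel (the project's actual filter may differ), the AND step could look like this. The name `apply_black_filter` and the threshold `val_min` are hypothetical.

```python
import numpy as np

def apply_black_filter(mask, hsv, val_min=60):
    """Remove all dark pixels from a boolean facelet mask.

    mask: boolean array of shape (height, width).
    hsv: array of shape (height, width, 3) holding h, s, v per pixel.
    val_min: assumed brightness threshold; a larger value filters more strongly.
    """
    black_filter = hsv[:, :, 2] >= val_min  # True where the pixel is bright enough
    return mask & black_filter
```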

Though it is desirable to detect the facelets "out of the box", realistically there are a few requirements for reliable facelet recognition with this method, because lighting conditions, webcams, cube colors etc. differ.

- Use a cube where the facelets are well separated and the gaps between the stickers are large rather than small.

- Calibrate the parameters of the filters at the beginning of a session.

- Sometimes disabling automatic white balance and automatic brightness in the configuration software of your webcam and adjusting the settings manually gives better results.

Run the script computer_vision.py to use the webcam and the GUI.


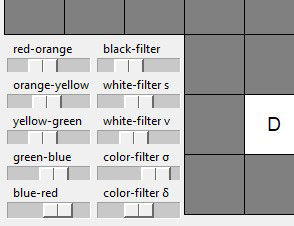

You can adjust the parameters of the filters with the sliders of the GUI and observe the image in the corresponding preview window. Moving a slider from left to right increases the filter strength, so you should start with the sliders at the left and move them to the right as far as possible while the facelets still pass the filter.

In the upper left picture the black-filter slider is far to the left, in the upper right picture it is too far to the right because the lower facelets do not pass the filter any more. The picture on the left shows a good calibration and gives a good facelet separation.

In the upper left picture both white-filter sliders are on the left: the white facelet (see the color picture above) passes the filter, but so does a lot of other stuff. In the upper right picture the sliders are pushed too far to the right and the white facelet does not pass any more. The picture on the left shows a good calibration of the white-filter.

The color-filter works similarly. All colored facelets must pass through, but not necessarily the white facelets. So the picture on the left side gives a valid calibration though the white facelet in the upper left corner is not fully recognized.

The facelets here are all recognized and the colors are assigned correctly. The default positions of the color-sliders work quite well. Usually only the red-orange slider has to be used for calibration if red or orange are not assigned correctly.
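To illustrate why the red-orange boundary is the sensitive one, here is a rough sketch of a color assignment from the mean h, s, v values of a facelet. It assumes OpenCV's hue range 0..179. Every boundary value and the function name `classify_facelet` are illustrative assumptions, not the defaults of the project's sliders.

```python
def classify_facelet(h, s, v, red_orange=6, orange_yellow=20, yellow_green=40,
                     green_blue=85, blue_red=160, sat_max=60, val_min=150):
    """Map the mean h, s, v of a facelet to a color name.

    The hue boundaries play the role of the color-sliders; all defaults
    are assumptions chosen for illustration.
    """
    if s < sat_max and v > val_min:
        return "white"
    # Red sits at both ends of the hue circle.
    if h < red_orange or h >= blue_red:
        return "red"
    if h < orange_yellow:
        return "orange"
    if h < yellow_green:
        return "yellow"
    if h < green_blue:
        return "green"
    return "blue"
```

Red and orange are only a few hue steps apart, so a small shift of `red_orange` changes the result. For example, a facelet with hue 12 is classified as orange with the default above and as red with `red_orange=15`.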

With the "Webcam import" button you can transfer the colors into the GUI client and then rotate the cube to analyze another cube face.

© 2019 Herbert Kociemba